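1. Add the Hadoop dependency for Windows

1.1. Extract the installation package

1.2. Copy the path of the extracted folder

Add an environment variable named

HADOOP_HOME

with the copied path as its value, then add the following entry to PATH:

%HADOOP_HOME%\bin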

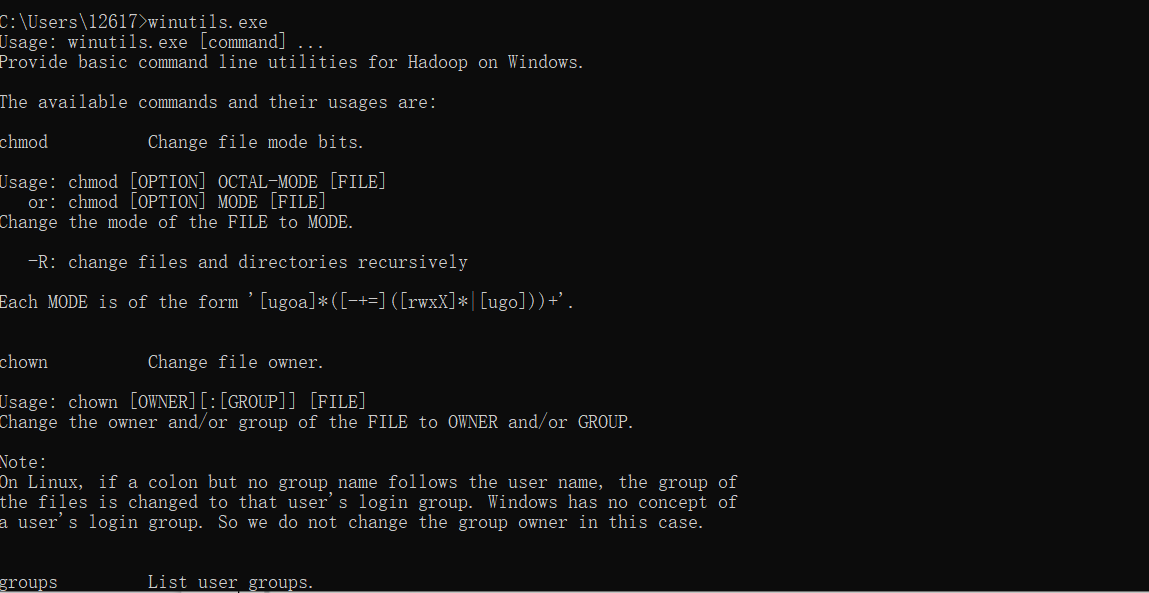

1.3. Test

If the following screen appears, the setup succeeded.
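
As a complement to the visual check, the environment variable can also be verified from Java. The sketch below is only an illustration: the class name `HadoopHomeCheck` is hypothetical, and it assumes the extracted package ships `winutils.exe` under its `bin` folder, which is the usual layout for Hadoop's Windows helper binaries but is not stated in this article.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

public class HadoopHomeCheck {

    // Builds the expected location of winutils.exe under a given HADOOP_HOME.
    // Assumption: the Windows package places winutils.exe in its bin folder.
    static Path winutilsPath(String hadoopHome) {
        return Paths.get(hadoopHome, "bin", "winutils.exe");
    }

    public static void main(String[] args) {
        String hadoopHome = System.getenv("HADOOP_HOME");
        if (hadoopHome == null || hadoopHome.isEmpty()) {
            System.out.println("HADOOP_HOME is not set");
            return;
        }
        Path winutils = winutilsPath(hadoopHome);
        System.out.println("HADOOP_HOME = " + hadoopHome);
        System.out.println(winutils + (Files.exists(winutils) ? " found" : " is missing"));
    }
}
```

Run it from a newly opened terminal or a restarted IDE, since processes started before the variable was added do not see the new value.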

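2. Edit the Windows 10 host mapping file (hosts file)

(a) Go to the C:\Windows\System32\drivers\etc directory

(b) Copy the hosts file to the desktop

(c) Open the desktop copy of the hosts file and add the following entries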

192.168.1.100 hadoop100

192.168.1.101 hadoop101

192.168.1.102 hadoop102

192.168.1.103 hadoop103

192.168.1.104 hadoop104

192.168.1.105 hadoop105

192.168.1.106 hadoop106

192.168.1.107 hadoop107

192.168.1.108 hadoop108

(d) Overwrite the hosts file in C:\Windows\System32\drivers\etc with the edited desktop copy
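
After step (d), it can be worth confirming that the entries actually landed in the system file. The following is a minimal sketch, not part of the original procedure: the class name `HostsFileCheck` and its `parseHosts` helper are hypothetical, and the file is read as ISO-8859-1 on the assumption that the hosts file holds plain ASCII entries.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class HostsFileCheck {

    // Parses hosts-file lines into a hostname -> IP map,
    // skipping blank lines and anything after a '#' comment marker.
    static Map<String, String> parseHosts(List<String> lines) {
        Map<String, String> mapping = new LinkedHashMap<>();
        for (String line : lines) {
            int hash = line.indexOf('#');
            String content = (hash >= 0 ? line.substring(0, hash) : line).trim();
            if (content.isEmpty()) {
                continue;
            }
            String[] fields = content.split("\\s+");
            // fields[0] is the IP address; every following field is a host name.
            for (int i = 1; i < fields.length; i++) {
                mapping.put(fields[i], fields[0]);
            }
        }
        return mapping;
    }

    public static void main(String[] args) throws IOException {
        Path hosts = Paths.get("C:\\Windows\\System32\\drivers\\etc\\hosts");
        Map<String, String> mapping =
                parseHosts(Files.readAllLines(hosts, StandardCharsets.ISO_8859_1));
        // Report the mapping for hadoop100 through hadoop108.
        for (int i = 100; i <= 108; i++) {
            String name = "hadoop" + i;
            System.out.println(name + " -> " + mapping.getOrDefault(name, "NOT MAPPED"));
        }
    }
}
```

Any host reported as NOT MAPPED points to a missing or mistyped line in the hosts file.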

3. Develop the Hadoop client (Java)

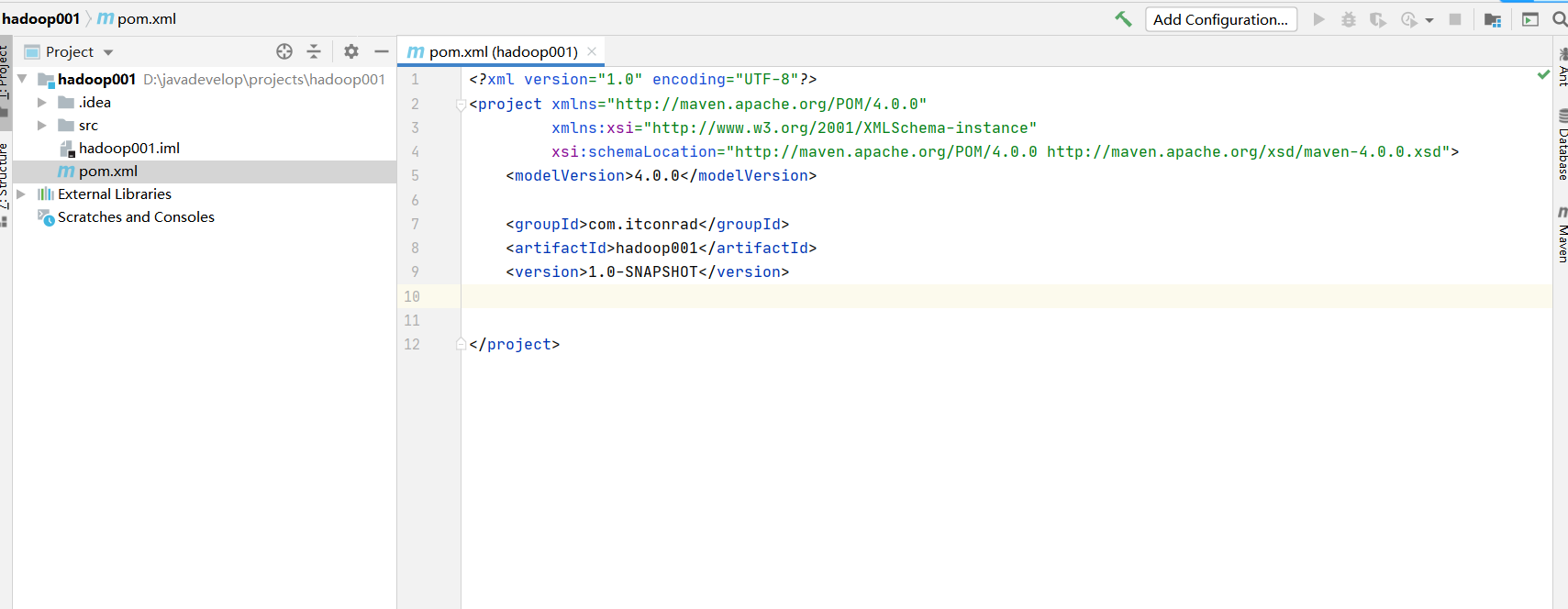

3.1. Create a Maven project

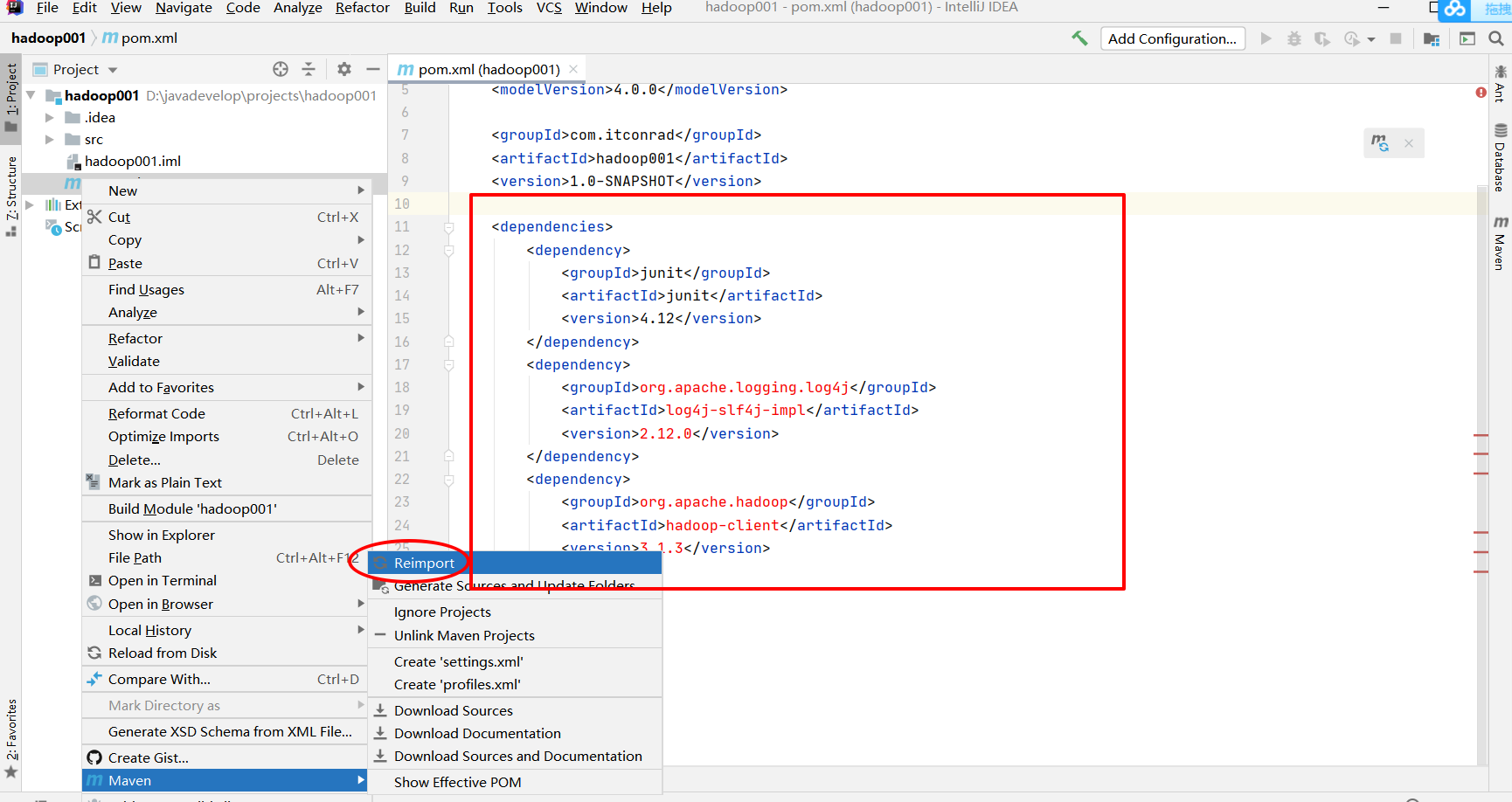

3.2. Add the dependencies to pom.xml

The dependencies are as follows:

    <dependencies>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.12</version>
        </dependency>
        <dependency>
            <groupId>org.apache.logging.log4j</groupId>
            <artifactId>log4j-slf4j-impl</artifactId>
            <version>2.12.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>3.1.3</version>
        </dependency>
    </dependencies>
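
Once Maven has resolved the dependencies, a quick way to confirm they are visible to the project is to try loading one class from each. This is only a sketch: the class name `ClasspathCheck` is hypothetical, and `org.slf4j.LoggerFactory` is assumed to arrive transitively with `log4j-slf4j-impl`.

```java
public class ClasspathCheck {

    // Returns true if the named class can be located without initializing it.
    static boolean isOnClasspath(String className) {
        try {
            Class.forName(className, false, ClasspathCheck.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            return false;
        }
    }

    public static void main(String[] args) {
        String[] expected = {
                "org.apache.hadoop.fs.FileSystem", // from hadoop-client
                "org.junit.Test",                  // from junit
                "org.slf4j.LoggerFactory"          // assumed transitive dependency
        };
        for (String name : expected) {
            System.out.println(name + ": " + (isOnClasspath(name) ? "found" : "MISSING"));
        }
    }
}
```

A MISSING line usually means the dependency was not downloaded or the project was not re-imported after editing pom.xml.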

3.3. In the project's src/main/resources directory, create a file named "log4j2.xml" with the following content:

    <?xml version="1.0" encoding="UTF-8"?>
    <Configuration status="error" strict="true" name="XMLConfig">
        <Appenders>
            <!-- Appender of type Console; the name attribute is required -->
            <Appender type="Console" name="STDOUT">
                <!-- Uses PatternLayout; output looks like
                     [INFO] [2018-01-22 17:34:01][org.test.Console]I'm here -->
                <Layout type="PatternLayout"
                        pattern="[%p] [%d{yyyy-MM-dd HH:mm:ss}][%c{10}]%m%n" />
            </Appender>
        </Appenders>
        <Loggers>
            <!-- Additivity is false -->
            <Logger name="test" level="info" additivity="false">
                <AppenderRef ref="STDOUT" />
            </Logger>
            <!-- Root LoggerConfig settings -->
            <Root level="info">
                <AppenderRef ref="STDOUT" />
            </Root>
        </Loggers>
    </Configuration>
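
To make the layout pattern easier to read, the sketch below rebuilds the same line shape in plain Java. It does not use Log4j at all; the class name `PatternPreview` is hypothetical, and it only mirrors the `%p`, `%d{yyyy-MM-dd HH:mm:ss}`, `%c`, `%m` and `%n` fields for a logger name short enough that `%c{10}` prints it in full.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

public class PatternPreview {

    // Same timestamp format as %d{yyyy-MM-dd HH:mm:ss} in the pattern.
    private static final DateTimeFormatter TIMESTAMP =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    // Mirrors "[%p] [%d{...}][%c{10}]%m%n": level, timestamp, logger name, message, newline.
    static String format(String level, LocalDateTime time, String logger, String message) {
        return "[" + level + "] [" + TIMESTAMP.format(time) + "][" + logger + "]"
                + message + System.lineSeparator();
    }

    public static void main(String[] args) {
        // Reproduces the sample line quoted in the configuration comment.
        System.out.print(format("INFO", LocalDateTime.of(2018, 1, 22, 17, 34, 1),
                "org.test.Console", "I'm here"));
    }
}
```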

4. Connect to Hadoop using the Java API

    package com.itconrad.hadoop.client;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.*;
    import org.apache.hadoop.io.IOUtils;
    import org.junit.After;
    import org.junit.Before;
    import org.junit.Test;

    import java.io.IOException;
    import java.net.URI;

    public class HadoopClient {

        FileSystem fileSystem;

        // Holds the cluster configuration parameters
        Configuration configuration = new Configuration();

        @Before
        public void before() throws IOException, InterruptedException {
            // 1. Create the HDFS client object
            fileSystem = FileSystem.get(
                    URI.create("hdfs://hadoop102:8020"),
                    configuration,
                    "itconrad"
            );
        }

        // 2. Operate on the cluster

        /**
         * Download: copy a file from the cluster to the local machine.
         */
        @Test
        public void get() throws IOException, InterruptedException {
            fileSystem.copyToLocalFile(
                    new Path("/hadoop_test_files01.txt"),
                    new Path("D:\\javadevelop\\projects\\test_files\\hadoop_copyToLocal.txt")
            );
        }

        /**
         * Upload: copy a local file or directory to the cluster.
         */
        @Test
        public void put() throws IOException {
            fileSystem.copyFromLocalFile(
                    new Path("C:\\Users\\12617\\Desktop\\mysql_linux"),
                    new Path("/")
            );
        }

        /**
         * Append: append a string to a file on the cluster.
         */
        @Test
        public void append() throws IOException {
            FSDataOutputStream out = fileSystem.append(
                    new Path("/hadoop_test_files02.txt")
            );
            out.write("append test".getBytes());
            IOUtils.closeStream(out);
        }

        /**
         * List: print the status of each entry directly under the root directory.
         */
        @Test
        public void ls() throws IOException {
            FileStatus[] fileStatuses = fileSystem.listStatus(new Path("/"));
            for (FileStatus fileStatus : fileStatuses) {
                System.out.println(fileStatus.getPath());
                System.out.println(fileStatus.getOwner());
                System.out.println(fileStatus.getBlockSize());
                System.out.println("====================" + fileStatus.getAccessTime() + "===================");
            }
        }

        /**
         * Delete: remove a path from the cluster (the second argument enables recursion).
         */
        @Test
        public void del() throws IOException {
            fileSystem.delete(
                    new Path("/hadoop_test_files01.txt"),
                    true
            );
        }

        /**
         * List files: recursively list the files in a directory with their block locations.
         */
        @Test
        public void lf() throws IOException {
            RemoteIterator<LocatedFileStatus> statusRemoteIterator = fileSystem.listFiles(
                    new Path("/mysql_linux"),
                    true
            );
            while (statusRemoteIterator.hasNext()) {
                LocatedFileStatus locatedFileStatus = statusRemoteIterator.next();
                System.out.println(locatedFileStatus.getPath());
                BlockLocation[] blockLocations = locatedFileStatus.getBlockLocations();
                for (int i = 0; i < blockLocations.length; i++) {
                    System.out.println("Block " + i);
                    // Print the hosts that store a replica of this block
                    String[] hosts = blockLocations[i].getHosts();
                    for (String host : hosts) {
                        System.out.print(host + " ");
                    }
                    System.out.println("");
                }
                System.out.println("=======================================");
            }
        }

        /**
         * Move or rename a file.
         */
        @Test
        public void mv() throws IOException {
            fileSystem.rename(
                    new Path("/mysql_linux/mysql.zip"),
                    new Path("/")
            );
        }

        @After
        public void after() throws IOException {
            // 3. Close the connection
            fileSystem.close();
        }
    }
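
If the tests above fail in `before()`, one thing worth checking is whether the address passed to `FileSystem.get` can be reached from the Windows machine at all. The sketch below uses only the JDK, not the Hadoop API: the class name `NameNodeReachability` and the 3-second timeout are illustrative choices, and a successful TCP connection only shows that something is listening on that port, not that HDFS itself is healthy.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.net.URI;

public class NameNodeReachability {

    // Extracts host and port from an hdfs:// URI without resolving the host name.
    static InetSocketAddress endpoint(String hdfsUri) {
        URI uri = URI.create(hdfsUri);
        return InetSocketAddress.createUnresolved(uri.getHost(), uri.getPort());
    }

    public static void main(String[] args) {
        InetSocketAddress target = endpoint("hdfs://hadoop102:8020");
        try (Socket socket = new Socket()) {
            // Resolve the host name now and attempt a TCP connection (3-second timeout).
            socket.connect(
                    new InetSocketAddress(target.getHostString(), target.getPort()), 3000);
            System.out.println("Reachable: " + target);
        } catch (IOException e) {
            // An UnknownHostException here suggests the hosts-file mapping is missing.
            System.out.println("Not reachable: " + target + " (" + e + ")");
        }
    }
}
```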

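5. Notes

(1) The user names can be ignored: because several clusters were used, the user names in the screenshots may be inconsistent. You can use root, which guarantees sufficient permissions; if the client requires a different user, create one with sudo privileges.

(2) Environment: CentOS 7, Java 1.8, Hadoop 3.1.3, 4 GB of memory, 50 GB of disk, with the required environment installed (see the first article in this series).

(3) The author is a student at Atguigu (尚硅谷), so this article may cite Atguigu course materials repeatedly.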